(A practical closed-loop control walkthrough using Node-RED, MQTT, and DeepSeek LLM.)

We believe the next generation of industrial systems might not just react — they might think, act, and learn.

Not just retrieving reference data like RAG, offering suggestions like a chatbot, or passively answering questions — but actively executing decisions and closing control loops like a human expert would.

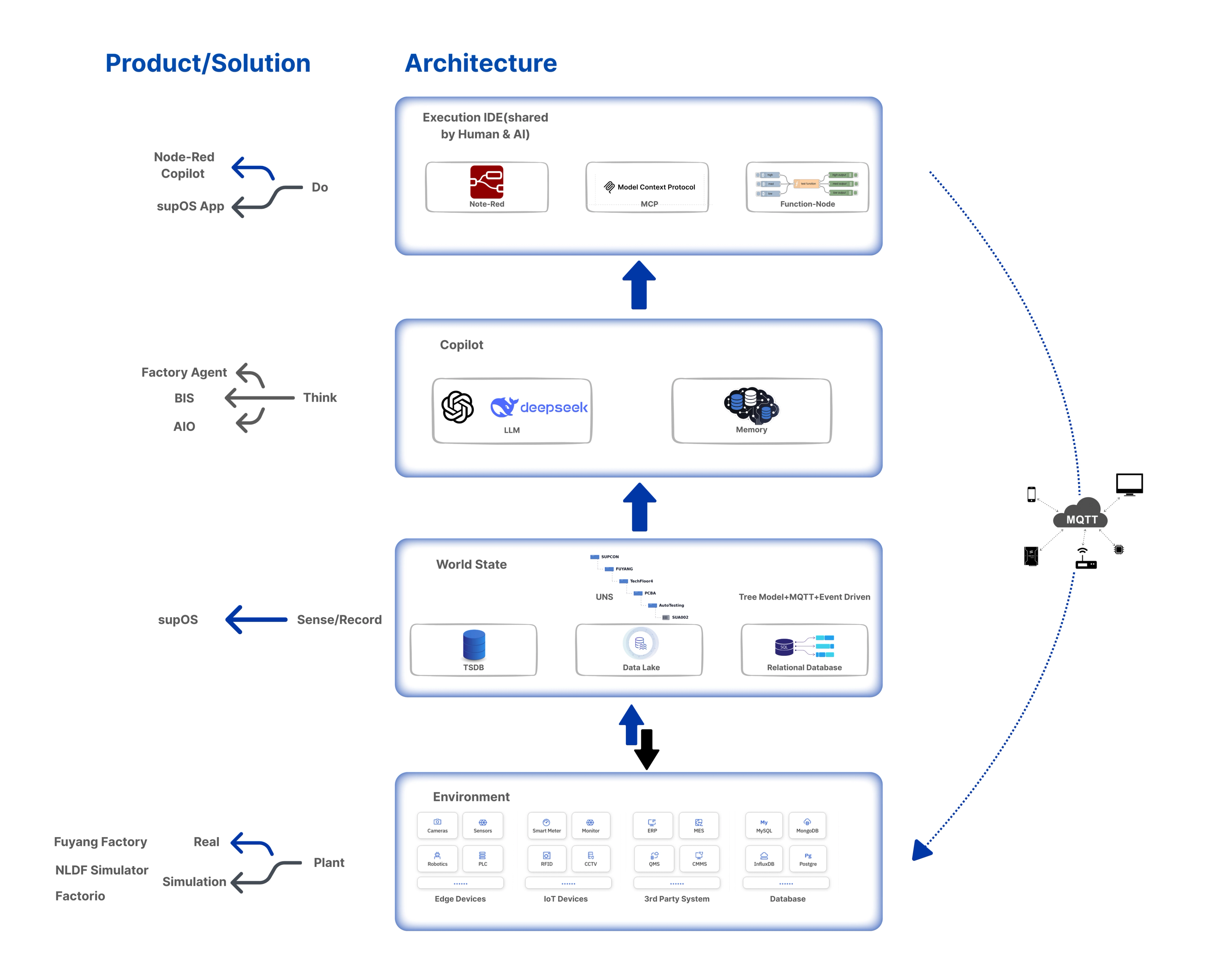

Factory Agent is our attempt to sketch that possibility:

A reasoning LLM agent system, running on Node-RED, listening to real-time MQTT topics, and making decisions for a simulated manufacturing factory.

It’s open-source, event-driven, and experimental.

Not because we have all the answers — but because this space is too exciting not to explore.

Why Industrial AI Needs Decision-Making Agents

The industrial world is full of data. But decision-making — especially at the edge — still relies heavily on human intuition and manual rules.

Today’s AI applications in industry often remain passive: they answer questions, summarize documents, or assist with interfaces. But very few actually make decisions, execute commands, and take accountability for outcomes in a closed-loop way.

Our question was simple:

Can we build an agent system that senses factory events, reasons about possible strategies, and executes actions — like a junior operator empowered with expert instincts?

To explore this, we started with a minimal viable system — and we are sharing it open-source to invite the broader community to build, extend, and challenge it.

Building the Closed-Loop Control System

In classical control theory, a closed-loop controller continuously monitors outputs and feeds information back into the system to adjust behavior — enabling self-correction even under changing conditions (e.g., PID loops in process control).
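For readers less familiar with the classical case, here is a minimal sketch of a discrete PID loop driving a toy heater model. The gains, the plant equation, and the function names are illustrative assumptions, not part of Factory Agent.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook discrete PID controller (illustrative gains, not tuned for a real plant)."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def step(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured      # feedback: compare the target with the observed output
        self.integral += error * dt      # accumulated error removes steady-state offset
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_heater(steps: int = 600, dt: float = 0.1) -> float:
    """Run the loop against a toy first-order heater and return the final temperature."""
    pid = PID(kp=2.0, ki=0.5, kd=0.0)
    ambient, temp = 20.0, 20.0
    for _ in range(steps):
        power = pid.step(setpoint=100.0, measured=temp, dt=dt)
        # Hypothetical plant: heating proportional to power, losses proportional to (temp - ambient).
        temp += (0.5 * power - 0.1 * (temp - ambient)) * dt
    return temp


if __name__ == "__main__":
    print(f"final temperature: {simulate_heater():.2f}")
```

The controller never sees the plant equation. It only sees the measured output and corrects toward the setpoint, which is the property the agent loop tries to preserve.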

We extend this paradigm into the LLM-agent world.

The loop now operates across five essential layers:

Perception Layer

- Connects to factory OT/IT data sources.

- Uses an MQTT-based Unified Namespace (UNS) to organize real-time data into semantically meaningful topics.

- Each agent "perceives" the factory world through selected MQTT topics.
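To make "perceiving through selected topics" concrete, the sketch below matches incoming topics against MQTT-style subscription filters (`+` for one level, `#` for the remainder) and keeps the latest payload per topic. The topic names and the `Perception` class are hypothetical, and a real deployment would rely on the broker and MQTT client to do the matching.

```python
import json
from dataclasses import dataclass, field


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Simplified MQTT filter matching; ignores special cases such as $-prefixed topics."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            return True                  # multi-level wildcard: matches everything from here on
        if i >= len(topic_levels):
            return False                 # filter is longer than the topic
        if level != "+" and level != topic_levels[i]:
            return False                 # literal level differs
    return len(filter_levels) == len(topic_levels)


@dataclass
class Perception:
    """Holds the latest JSON payload for every topic an agent has chosen to observe."""
    filters: list[str]
    state: dict[str, object] = field(default_factory=dict)

    def on_message(self, topic: str, payload: str) -> bool:
        if not any(topic_matches(f, topic) for f in self.filters):
            return False                 # outside this agent's view of the factory
        self.state[topic] = json.loads(payload)
        return True


if __name__ == "__main__":
    view = Perception(filters=["factory/line1/+/status"])  # hypothetical UNS path
    view.on_message("factory/line1/cutter01/status", '{"state": "idle"}')
    print(view.state)
```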

Reasoning & Decision-Making Layer (Agent Layer)

- Each agent is an independent LLM-powered unit.

- It has its own prompts, goals, and (in the future) memory modules.

- It thinks, plans, and triggers actions when needed.

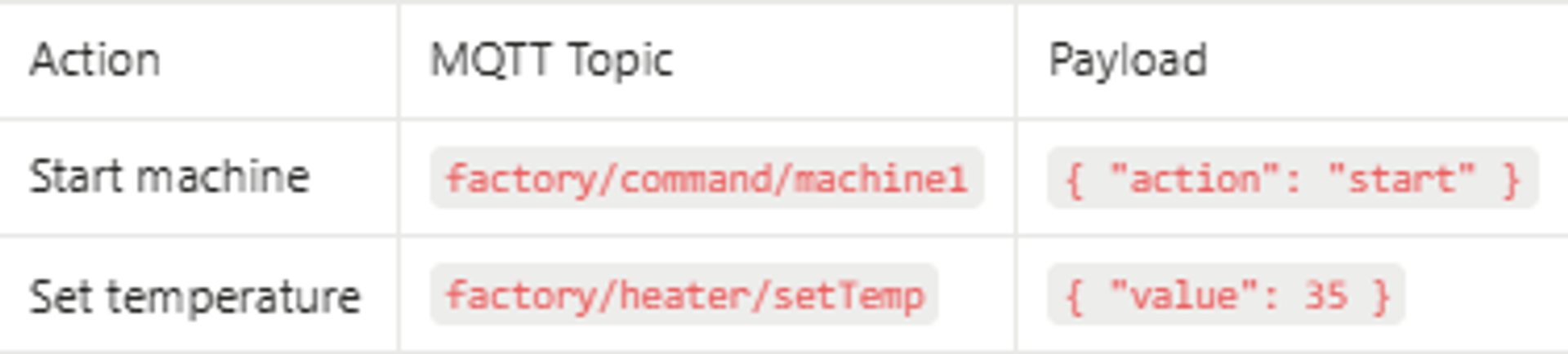
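One way to picture an agent is as a small object that combines its prompt, its goal, and the current state into a single request to a model. The sketch below is an assumption about how that could look, not the Factory Agent node implementation; `Agent`, `build_prompt`, and `stub_llm` are hypothetical names, and the model call is replaced by a stub so the example runs offline.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    name: str
    system_prompt: str
    goal: str
    llm: Callable[[str], str]   # any function that maps a prompt to a text reply

    def build_prompt(self, state: dict) -> str:
        """Combine the agent's role, its goal, and the observed state into one prompt."""
        return "\n\n".join([
            self.system_prompt,
            f"Goal: {self.goal}",
            "Current factory state (JSON):",
            json.dumps(state, indent=2, sort_keys=True),
            "Reply with a single JSON command, or NO_ACTION if nothing should change.",
        ])

    def decide(self, state: dict) -> str:
        return self.llm(self.build_prompt(state))


def stub_llm(prompt: str) -> str:
    """Placeholder for a real model call; always returns the same canned reply."""
    return "NO_ACTION"


if __name__ == "__main__":
    scheduler = Agent(
        name="scheduler",
        system_prompt="You are a production scheduling agent.",
        goal="Minimize late orders",
        llm=stub_llm,
    )
    print(scheduler.decide({"queue_length": 3}))
```

Passing the model in as a plain callable keeps the agent independent of any specific LLM provider.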

Action Dispatch Layer

- Agent outputs are transformed into structured JSON commands.

- These are published via MQTT to control topics.

- Each command is addressed to the corresponding system component (e.g., machine, heater, scheduler).
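The dispatch step can be sketched as a small function that pulls a JSON object out of a free-text model reply, checks it, and picks a control topic. The `factory/control/<target>` layout and the `target`/`action` fields are assumptions made for illustration; the project's actual command schema may differ.

```python
import json

CONTROL_PREFIX = "factory/control"      # hypothetical control-topic root
REQUIRED_FIELDS = ("target", "action")  # hypothetical minimal command schema


def to_command(llm_reply: str) -> tuple[str, str]:
    """Extract the JSON object from an LLM reply and return (topic, payload)."""
    start, end = llm_reply.find("{"), llm_reply.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in LLM reply")
    command = json.loads(llm_reply[start:end + 1])   # raises ValueError on malformed JSON
    missing = [name for name in REQUIRED_FIELDS if name not in command]
    if missing:
        raise ValueError(f"command is missing fields: {missing}")
    topic = f"{CONTROL_PREFIX}/{command['target']}"  # address the command to its component
    return topic, json.dumps(command, sort_keys=True)


if __name__ == "__main__":
    reply = 'Lowering the setpoint. {"target": "heater1", "action": "set_temperature", "value": 180}'
    print(to_command(reply))
```

Rejecting malformed replies before anything is published keeps a confused model from sending arbitrary text to the plant.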

Execution Layer (Environment/Plant)

- The (real or simulated) plant executes actions.

- Resulting changes are published back into MQTT, closing the feedback loop.

Feedback & Learning Layer

- Monitors action results.

- Prepares for future learning mechanisms: working memory updates, action scoring, strategy tracebacks.

This forms a full LLM-driven realization of the classical “observe–plan–act–observe” loop — now expanded to support distributed, evolving agents.
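The five layers can be exercised end to end without any infrastructure. The sketch below is a toy under stated assumptions: an in-memory `Bus` stands in for the MQTT broker, a threshold rule stands in for the LLM call, and the oven model and topic names are invented for illustration.

```python
import json
from collections import deque

STATE_TOPIC = "factory/oven1/state"   # hypothetical topic names
CMD_TOPIC = "factory/oven1/cmd"


class Bus:
    """In-memory stand-in for an MQTT broker (exact-topic subscriptions only)."""
    def __init__(self):
        self.handlers, self.queue = {}, deque()

    def subscribe(self, topic, handler):
        self.handlers.setdefault(topic, []).append(handler)

    def publish(self, topic, payload):
        self.queue.append((topic, json.dumps(payload)))

    def drain(self):
        while self.queue:
            topic, raw = self.queue.popleft()
            for handler in self.handlers.get(topic, []):
                handler(topic, json.loads(raw))


class Plant:
    """Execution layer: applies commands and publishes the resulting state."""
    def __init__(self, bus):
        self.bus, self.temp, self.heater_on = bus, 150.0, False
        bus.subscribe(CMD_TOPIC, self.on_command)

    def on_command(self, topic, cmd):
        self.heater_on = cmd["heater"] == "on"

    def tick(self):
        self.temp += 5.0 if self.heater_on else -3.0
        self.bus.publish(STATE_TOPIC, {"temp": self.temp})


class Agent:
    """Perception, decision, and dispatch; `decide` is where an LLM call would go."""
    def __init__(self, bus, low=180.0, high=200.0):
        self.bus, self.low, self.high, self.history = bus, low, high, []
        bus.subscribe(STATE_TOPIC, self.on_state)

    def decide(self, state):
        if state["temp"] < self.low:
            return {"heater": "on"}
        if state["temp"] > self.high:
            return {"heater": "off"}
        return None                       # inside the band: no action needed

    def on_state(self, topic, state):
        cmd = self.decide(state)
        self.history.append((state["temp"], cmd))   # raw material for feedback and scoring
        if cmd is not None:
            self.bus.publish(CMD_TOPIC, cmd)


def run(steps=40):
    bus = Bus()
    plant, agent = Plant(bus), Agent(bus)
    for _ in range(steps):
        plant.tick()   # plant publishes state
        bus.drain()    # agent observes and decides; plant receives any command
    return plant, agent


if __name__ == "__main__":
    plant, agent = run()
    print(plant.temp, len(agent.history))
```

Each pass through `run` is one trip around the loop: the plant publishes state, the agent observes and decides, the command is dispatched, and the next state reflects the result. The `history` list marks where a feedback layer could later attach action scoring.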

A Hands-on Walkthrough

Architecture Overview

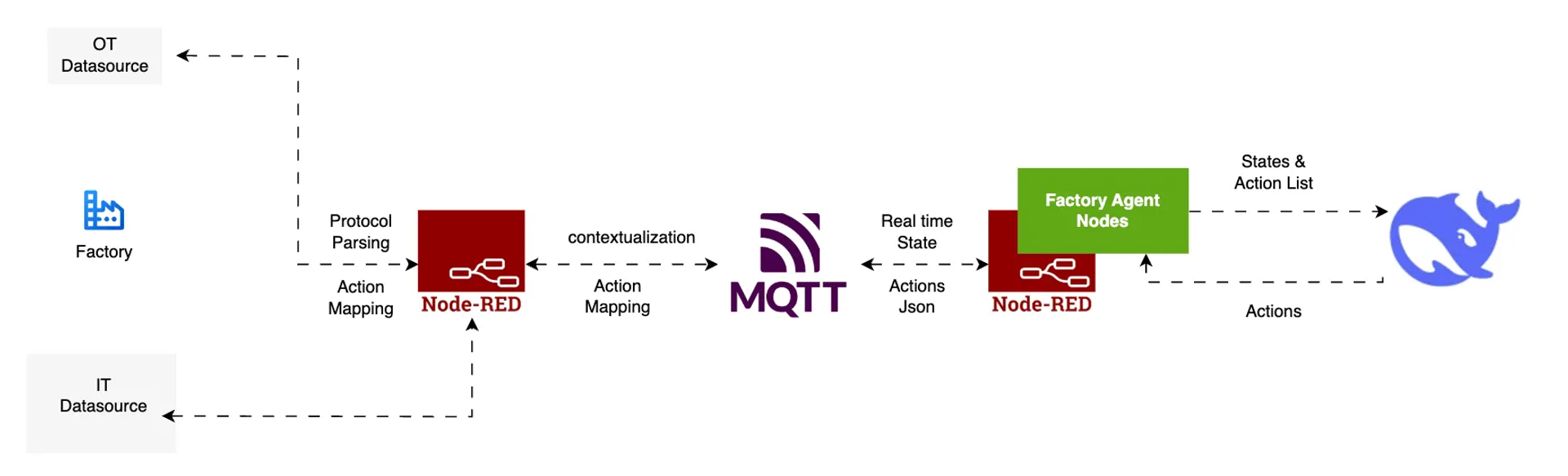

The system integrates four key components:

- Node-RED — Real-time orchestration of message flows between agents, topics, and execution systems.

- MQTT Broker — A lightweight event-driven backbone that enables a Unified Namespace (UNS) structure.

- Factory Agent Nodes — Specialized Node-RED nodes managing perception (state input), reasoning prompts, and LLM action generation.

- LLMs (e.g., DeepSeek, Gemini) — Perform real-time reasoning, planning, and decision-making based on observed context.

Each LLM instance receives structured states from the MQTT topics, reasons about possible strategies, and outputs control instructions that are routed back through MQTT.

Each control instruction is published in a topic-payload format.
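As an illustration of the topic-payload shape, the snippet below builds one message and serializes its payload as JSON. The topic name and payload fields are hypothetical and do not reflect the project's actual schema.

```python
import json

# Hypothetical control instruction: the topic addresses the component, the payload says what to do.
message = {
    "topic": "factory/control/heater1",
    "payload": {"target": "heater1", "action": "set_temperature", "value": 180},
}


def to_wire(msg: dict) -> tuple[str, str]:
    """Return the (topic, JSON string) pair that would be handed to an MQTT publish call."""
    return msg["topic"], json.dumps(msg["payload"], sort_keys=True)


if __name__ == "__main__":
    topic, body = to_wire(message)
    print(topic)
    print(body)
```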

Try It Yourself: Factory Agent and Virtual Factory Simulation

We have made both the Factory Agent modules and the virtual factory simulation fully open-source, enabling anyone to explore and build intelligent, closed-loop control systems.

With the virtual factory, you can:

- Deploy a simulated manufacturing environment that publishes realistic real-time MQTT topics.

- Emulate disruptions, resource constraints, production dynamics, and more.

- Safely and iteratively test your Factory Agent configurations, strategies, and decision-making logic.

By connecting Factory Agent nodes, you can:

- Listen to real-time factory events.

- Reason about operational strategies using LLMs.

- Act by sending control instructions back to the factory system — completing the observe-plan-act-observe loop.

📂 Explore the source code and quickstart guide:

What’s Next: Memory, Multi-Agent, and the Future of Thinking Factories

This is only the beginning. The next steps will enhance both the architecture and internal components:

- From single-agent scheduling to multi-agent collaboration

- Support for more LLMs, allowing seamless integration of future models

- Tool access for agents

  - Connecting agents to numerical solvers, ML models, external APIs

- Enhanced virtual factory simulation, enabling richer and more diverse test scenarios

- Memory integration

  - Working memory (context window control)

  - Episodic memory (decision history)

  - Procedural memory (reusable strategies)

  - Semantic memory (public knowledge)

- Feedback loop enhancements

  - Action scoring, feedback-aware iteration

We’re building more than a product — we’re crafting a research playground to rethink how factories might reason, adapt, and collaborate.

Let’s build thinking factories together.